Data Fabric

What is a data fabric?

A unified view of all data assets regardless of where they reside or how they are accessed

A data fabric brings an organization's various data environments together under a single, unified architecture. The model is designed to simplify data access, making self-service data access and consumption easier across the enterprise.

The goal of a data fabric is to provide a unified view of data, making it easily accessible and usable for various applications and users.

Most often, data fabrics are used for analytical purposes, but the same practices can also be extended to operational data.

Data fabric in action

Extending a data fabric to operational data also makes data management and data processing more efficient, because data access and transformation can be automated.

Adopting an operational data fabric architecture can bring several distinct advantages to an enterprise:

Enable holistic, data-centric decision-making

Improve data accessibility

Strengthen security and privacy measures

Eliminate data silos

Enable integration of new data sources

Data fabrics become increasingly important as the volume and complexity of data continue to grow and businesses need more efficient and effective ways to manage and use their data.

Data must be easily accessible to those who need it, rather than being locked away or scattered in various locations.

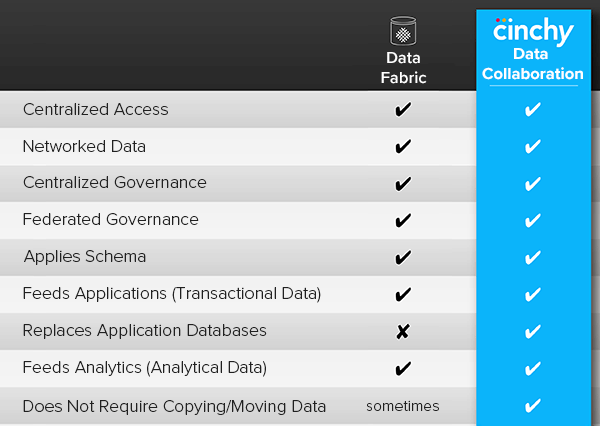

Data collaboration goes beyond data fabric

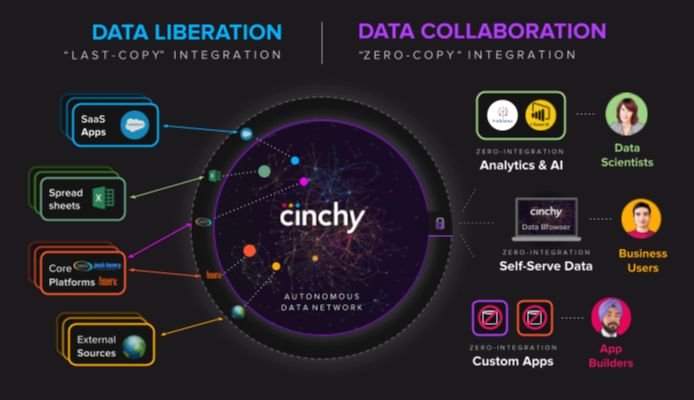

Data Collaboration amplifies data management by building on an underlying data fabric approach and methodology, while redefining and extending the boundaries of how applications and data interact.

A Data Collaboration Platform is inherently a network of data sets, organized through an operational data fabric approach.

Systems and applications are connected to the operational fabric, which liberates their data onto the network, where it is available to any application or person who has been granted access to it.
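
As a rough illustration of that idea, the sketch below models a fabric as a shared registry of data sets with per-consumer access grants. It is a toy, in-memory example and not how any particular data fabric or Data Collaboration product is implemented; the `DataFabric` class, its `connect`, `grant`, and `read` methods, and the sample system and data set names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class DataFabric:
    """Toy in-memory stand-in for an operational data fabric (hypothetical)."""

    # data set name -> records contributed by connected systems
    datasets: dict[str, list[dict]] = field(default_factory=dict)
    # consumer name -> names of the data sets it may read
    grants: dict[str, set[str]] = field(default_factory=dict)

    def connect(self, dataset: str, records: list[dict]) -> None:
        """Connect a source system by publishing its records under a shared name."""
        self.datasets.setdefault(dataset, []).extend(records)

    def grant(self, consumer: str, dataset: str) -> None:
        """Give an application or person read access to one data set."""
        self.grants.setdefault(consumer, set()).add(dataset)

    def read(self, consumer: str, dataset: str) -> list[dict]:
        """Return a copy of the data set, but only for consumers with a grant."""
        if dataset not in self.grants.get(consumer, set()):
            raise PermissionError(f"{consumer} has no access to {dataset}")
        return list(self.datasets.get(dataset, []))


fabric = DataFabric()
fabric.connect("customers", [{"id": 1, "name": "Ada"}])    # e.g. from a CRM
fabric.connect("customers", [{"id": 2, "name": "Grace"}])  # e.g. from a billing system
fabric.grant("reporting_app", "customers")

rows = fabric.read("reporting_app", "customers")
```

Here `reporting_app` sees records from both source systems through one call, while any consumer without a grant gets a `PermissionError`. A real fabric would typically leave data in place and resolve access through connectors and policies rather than copying records into one structure.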

Data Collaboration eliminates the need for application data integration, making it easier to access and change data from multiple sources within a single self-serve interface.

“Data Collaboration actually acts as a data fabric for applications that are not natively built using the data set network in the place of an operational database. A traditional data fabric will always require applications to have their own databases that connect to the network, however, while dataware allows developers to build new applications without dedicated operational databases.”

Eckerson Group, The Rise of Data Collaboration

Data Collaboration as an architecture design has several core components.

This allows for real-time and always-up-to-date access to all data on the network, regardless of where it is stored or how it is connected.

Connected data without the effort, time, and cost of traditional data integration.